%%{init: {"theme":"base","themeVariables":{"fontFamily":"Inter, system-ui, sans-serif","fontSize":"16px","primaryColor":"#eef2f7","primaryTextColor":"#0f172a","lineColor":"#475569","tertiaryColor":"#e2e8f0"},"flowchart":{"curve":"basis","nodeSpacing":30,"rankSpacing":50}}}%%

flowchart LR

P["Prompts"] --> M["SFT Model πθ"]

M --> RA["Response A"]

M --> RB["Response B"]

RA --> H["Human Rater"]

RB --> H

H -->|"A ≻ B"| RM["Reward Model rθ"]

RM -->|"r(x,y)"| PPO["PPO"]

PPO -->|"Update πθ"| M

From Pretrained Model to Deployed Assistant

Alignment, Decoding, and Distillation

2026-02-12

Sources

Content derived from: J&M Ch. 8 (Transformers), Ch. 7 (Large Language Models)

Administrivia

- Project checkpoints due Today!

Learning Objectives

By the end of this lecture, you will be able to:

Explain the difference between training-time, inference-time, and deployment-time control in LLMs

Implement and compare decoding algorithms

Describe how alignment (SFT, RLHF, DPO) modifies model behavior

Explain how distillation enables efficient deployment

Today’s roadmap

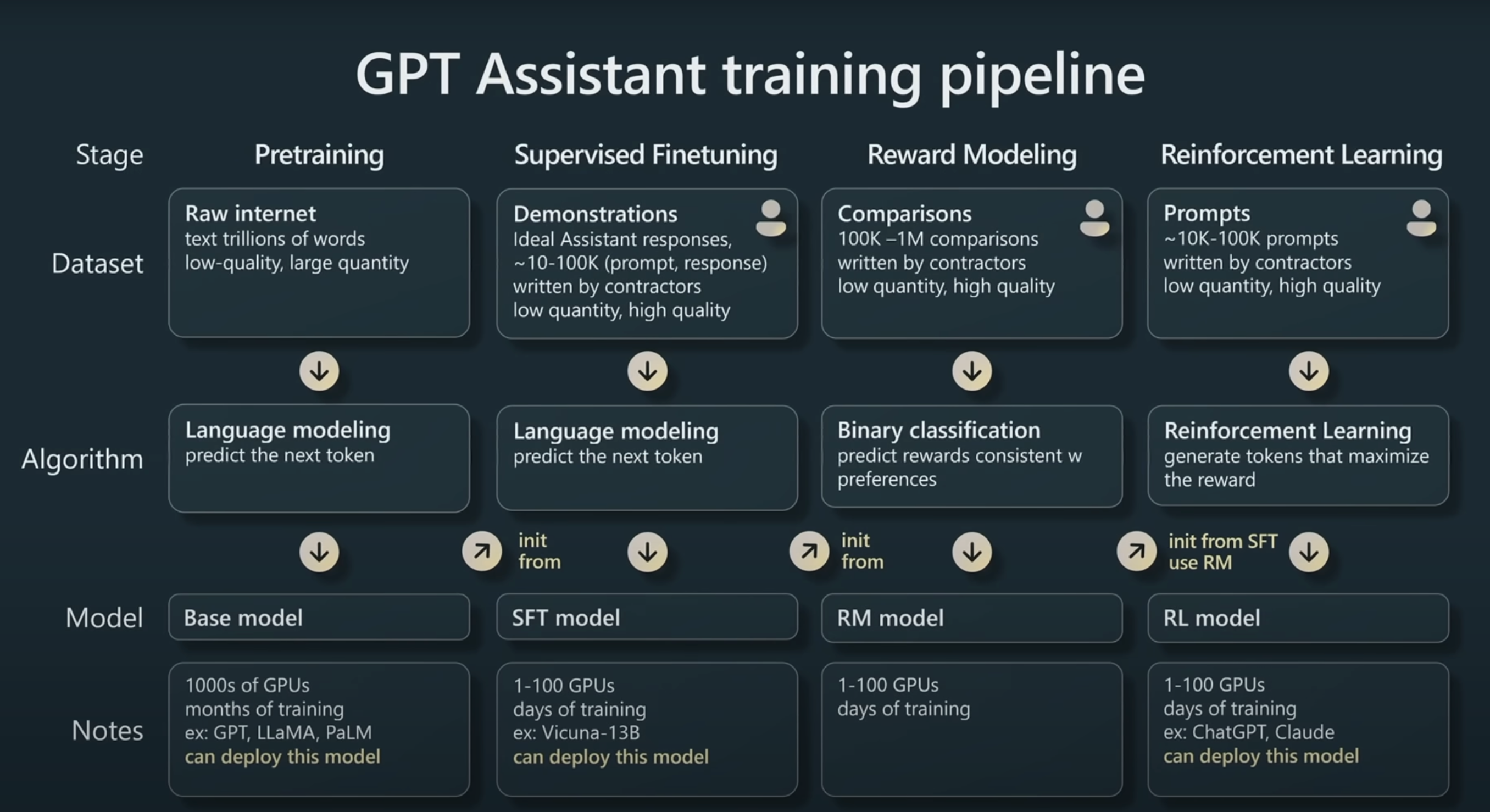

Karpathy (2023): GPT Assistant Training Pipeline

Last time: Pretraining (Stage 1). Today: everything after.

- Part 5: Instruction Tuning (SFT) — teaching the model to follow instructions

- Part 6: RLHF & DPO — aligning with human preferences

- Part 7: Decoding Algorithms — how LLMs actually generate text

- Part 8: Knowledge Distillation — deploying smaller models

Part 5: Instruction Tuning

Base models complete text — they don’t follow instructions

Base Model

Continues the article instead of summarizing

Instruction-Tuned Model

Actually summarizes as instructed

Supervised Fine-Tuning (SFT) on instruction-response pairs

Training data format:

SFT objective:

\[ \mathcal{L}_{\text{SFT}} = -\sum_{i=1}^{N} \log P_\theta(y_i \mid x_i) \]

- \(x_i\): instruction (input)

- \(y_i\): desired response (output)

- Same cross-entropy loss, but on curated instruction data

Instruction tuning: Implementation details

Formatting data for instruction-tuned models:

# For summarization with instruction-tuned models

def prepare_for_summarization(article, tokenizer):

prompt = f"Summarize the following article.\n\n{article}\n\nTL;DR:"

return tokenizer(prompt, return_tensors="pt")

# System prompts set the model's "persona"

system_prompt = "You are a helpful assistant and an expert at summarizing articles."Fine-tuning with label masking (for training summarization models):

Large models are expensive to deploy

We want similar quality at lower cost.

Preview: Knowledge Distillation (Part 8)

We can train a smaller “student” model to mimic the large model’s behavior — getting most of the quality at a fraction of the cost.

Before turning to efficiency, we’ll address a deeper limitation of SFT: It only learns from one correct answer per instruction. Can we do better?

Part 6: Preference Alignment with RLHF

Why RLHF? SFT isn’t enough

- One "correct" answer per instruction

- Can't encode preferences between valid responses

- Expensive to write high-quality responses

- May learn to imitate surface patterns

- Captures human preferences between options

- Easier to judge than to write

- Learns subtle quality distinctions

- Optimizes for what humans actually want

Key insight: It’s easier to say “A is better than B” than to write the perfect A.

RLHF: The three-step process

RLHF data flow

The reward model is a learned proxy for human judgment — it scores responses so we don’t need a human in the loop at training time.

Reward model intuition: Learning from comparisons

"Gravity is like an invisible magnet in the Earth that pulls everything down — that's why when you jump, you come back!"

"Gravity is a fundamental force described by general relativity as the curvature of spacetime caused by mass-energy..."

P(A ≻ B) = σ(2.4 − 0.8) = σ(1.6) ≈ 0.83

This intuition leads to the Bradley-Terry model →

Training the reward model

Bradley-Terry preference model:

\[ P(A \succ B) = \sigma(r_\theta(x, y_A) - r_\theta(x, y_B)) \]

Reward model loss:

\[ \mathcal{L}_{\text{RM}} = -\mathbb{E}_{(x, y_w, y_l)} \left[ \log \sigma(r_\theta(x, y_w) - r_\theta(x, y_l)) \right] \]

- \(y_w\): preferred (winning) response

- \(y_l\): dispreferred (losing) response

- \(\sigma\): sigmoid function

The reward model learns to assign higher scores to preferred responses.

Optimizing the LLM with reinforcement learning

Objective:

\[ \max_\theta \mathbb{E}_{y \sim \pi_\theta(y|x)} [r(x, y)] - \beta \cdot \text{KL}[\pi_\theta \| \pi_{\text{ref}}] \]

- PPO (Proximal Policy Optimization) is commonly used

- KL penalty prevents “reward hacking”

From RLHF to DPO: What changes?

- Train a separate reward model

- Run PPO optimization loop

- Complex, unstable training

- Three separate stages

- No reward model needed

- No RL loop required

- Directly optimize preference pairs

- Standard supervised learning

Key insight: Same preference data, dramatically simpler training.

Alternatives to RLHF: Direct Preference Optimization

Key insight: The optimal RLHF policy has a closed-form relationship with the reward model. DPO exploits this to optimize directly on preference pairs — no reward model or RL needed.

DPO loss (Rafailov et al., 2023):

\[ \mathcal{L}_{\text{DPO}} = -\mathbb{E}\left[\log \sigma\left(\beta \log \frac{\pi_\theta(y_w|x)}{\pi_{\text{ref}}(y_w|x)} - \beta \log \frac{\pi_\theta(y_l|x)}{\pi_{\text{ref}}(y_l|x)}\right)\right] \]

Reading the formula: increase the probability of the preferred response \(y_w\) (relative to the reference model) and decrease the probability of the dispreferred response \(y_l\).

Alignment goals: Helpful, Harmless, Honest

Completes requested tasks

Provides useful information

Avoids harmful content

Doesn't assist malicious use

Doesn't make things up

Corrects mistakes

Tension

These goals can conflict: A helpful answer to a harmful request is itself harmful.

Concept check

Quick questions (think for 30 seconds):

- In the RL objective, what happens if we set β = 0?

- An RLHF-trained chatbot starts adding “As an AI language model…” to every response. What went wrong?

RL Objective reminder: \[ \max_\theta \mathbb{E}_{y \sim \pi_\theta(y|x)} [r(x, y)] - \beta \cdot \text{KL}[\pi_\theta \| \pi_{\text{ref}}] \]

From training to generation

We’ve covered how LLMs are trained to be helpful:

- Pretraining — learn language from raw text

- SFT — learn to follow instructions (Part 5)

- RLHF/DPO — align with human preferences (Part 6)

Now: how do they actually generate text?

Given a trained model and a prompt, how do we produce an output — one token at a time?

But note: what you put in matters as much as how tokens come out. We’ll cover the output side today (decoding), and the input side next time (prompting, in-context learning, reasoning).

Three control knobs in LLM systems

- SFT

- RLHF

- DPO

- Greedy

- Temperature

- Top-k / Top-p

- Knowledge distillation

These mechanisms operate on different axes but interact in final behavior.

Training vs. inference: Different levers

- Alignment modifies the token distribution

- Changes relative likelihoods

- Decoding determines which tokens get chosen

These interact in practice:

- Greedy decoding can amplify repetitive artifacts from training

- High temperature increases hallucination risk regardless of alignment

- Beam search often reduces diversity even in well-aligned models

Part 7: Text Generation and Decoding

The autoregressive generation loop

def generate(model, prompt_ids, max_new_tokens=50):

input_ids = prompt_ids

for _ in range(max_new_tokens):

# Forward pass: get logits for all positions

logits = model(input_ids) # (B, seq_len, vocab_size)

# Extract logits for the LAST position only

next_token_logits = logits[:, -1, :] # (B, vocab_size)

# DECODE: Select next token (this is where methods differ!)

next_token = decode(next_token_logits)

# Append and continue

input_ids = torch.cat([input_ids, next_token.unsqueeze(1)], dim=1)

return input_idsThe decode() function is where greedy, sampling, top-k, and top-p differ!

Decoding as search in sequence space

Generating text is approximate search over an exponential space.

Why does the decoding method matter?

Prompt: “The city of Paris is”

The challenge: find the sweet spot between coherence and diversity.

Greedy decoding: Always pick the most likely token

\[\hat{w}_t = \arg\max_{w} P(w \mid w_1, \ldots, w_{t-1})\]

- Deterministic (reproducible)

- Fast (no sampling)

- Often coherent

- Repetitive outputs

- No diversity

- Can get stuck in loops

Vanilla sampling: Sample from the distribution

\[w_t \sim P(w \mid w_1, \ldots, w_{t-1})\]

softmaxconverts logits to probabilitiesmultinomialsamples from the categorical distribution- Each run produces different output

Problem with Pure Sampling

Low-probability tokens can still be selected, leading to incoherent text:

“The capital of France is accordion…”

Temperature sampling: Control randomness

\[P_T(w) = \frac{\exp(z(w)/T)}{\sum_{w'} \exp(z(w')/T)}\]

| Temperature | Effect | Distribution |

|---|---|---|

| T → 0 | More deterministic | Approaches greedy |

| T = 1 | Original distribution | Standard sampling |

| T > 1 | More random | Flatter distribution |

Temperature effects visualized

Top-k sampling: Only consider the k best tokens

def topk(logits, k=50):

"""Sample from only the top k tokens"""

# Get top k logits and their indices

top_logits, top_indices = torch.topk(logits, k, dim=-1)

# Sample from the top k

probs = F.softmax(top_logits, dim=-1)

sampled_idx = torch.multinomial(probs, num_samples=1)

# Map back to vocabulary indices

return torch.gather(top_indices, -1, sampled_idx).squeeze(-1)Idea: Truncate the long tail of unlikely tokens, then sample from the rest.

k = 1: Equivalent to greedy decoding

Visualizing truncation: top-k vs top-p

Same distribution, different truncation:

.40

.25

.15

.10

.05

.05

.40

.25

.15

.10

.05

.05

Top-k misses “an” (10% probability) because it always takes exactly k. Top-p includes it because 90% mass hasn’t been reached yet.

Top-p (nucleus) sampling: Adaptive truncation

Idea: Keep the smallest set of tokens whose cumulative probability ≥ p

Algorithm (Holtzman et al., 2020):

- Sort tokens by probability (highest first)

- Compute cumulative probabilities

- Find the cutoff where cumulative probability exceeds p

- Zero out all tokens beyond the cutoff

- Sample from the remaining tokens

Top-p implementation

def topp(logits, p=0.9):

"""Nucleus sampling: dynamic truncation based on cumulative probability"""

# Step 1: Sort by probability (descending)

sorted_logits, sorted_indices = torch.sort(logits, descending=True, dim=-1)

sorted_probs = F.softmax(sorted_logits, dim=-1)

# Step 2: Compute cumulative probabilities

cumsum_probs = torch.cumsum(sorted_probs, dim=-1)

# Step 3-4: Find and apply cutoff

sorted_indices_to_remove = cumsum_probs > p

# Tricky: shift right so we INCLUDE the token that crosses p

sorted_indices_to_remove[..., 1:] = sorted_indices_to_remove[..., :-1].clone()

sorted_indices_to_remove[..., 0] = False # Always keep at least one token

sorted_logits[sorted_indices_to_remove] = float('-inf')

# Step 5: Sample from remaining tokens

probs = F.softmax(sorted_logits, dim=-1)

sampled_idx = torch.multinomial(probs, num_samples=1)

return torch.gather(sorted_indices, -1, sampled_idx).squeeze(-1)The right-shift trick (lines 8–9)

Without the shift, we’d exclude the token that crosses the threshold. The shift ensures the nucleus always covers at least p probability mass.

Top-p example: Adaptive behavior

cumsum: [0.70, 0.85, 0.93, 0.97, 1.0]

cumsum: [0.25, 0.45, 0.63, 0.78, 0.90, 1.0]

Key advantage over top-k: The “effective k” adapts to the distribution!

Concept check

Quick questions (think for 30 seconds):

- What happens to entropy as temperature increases?

- When does top-k approximate top-p?

- Why might greedy decoding increase repetition?

Comparing decoding methods

| Method | Diversity | Coherence | Deterministic? | Best for |

|---|---|---|---|---|

| Greedy | Low | High | Yes | Code, factual QA |

| Temperature (low) | Low | High | No | Slight variation |

| Temperature (high) | High | Low | No | Brainstorming |

| Top-k | Medium | Medium-High | No | General use |

| Top-p | Medium | High | No | Dialogue, creative |

In practice: Top-p (p=0.9) with temperature (t=0.7-0.9) is a common default.

What about beam search?

Beam search keeps the top-B most likely sequences at each step (not just tokens).

- Machine translation

- Summarization

- Tasks with a single "correct" output

- Tends to produce bland, generic text

- Maximizes likelihood ≠ maximizes quality

- Sampling methods preferred for dialogue

Modern LLM APIs (ChatGPT, Claude) use sampling-based methods, not beam search.

Generation evaluation metrics

Perplexity (lower = better): \[\text{PPL} = \exp\left(-\frac{1}{N}\sum_{i=1}^N \log P(w_i \mid w_1, \ldots, w_{i-1})\right)\]

"How surprised is the model?"

(e.g., CoLA classifier)

(higher = more varied)

How decoding methods affect metrics

| Method | Perplexity | Fluency | Diversity |

|---|---|---|---|

| Greedy | Best | High | None |

| High temperature | Worse | Lower | High |

| Top-p (p=0.9) | Good | High | Good |

Top-p gives the best balance — good perplexity and diversity without sacrificing fluency.

Part 8: Knowledge Distillation

From research model to production system

Distillation is deployment engineering — it doesn’t change what the model knows, but makes it practical to serve.

Knowledge distillation: training smaller models from larger ones

Core idea: A small “student” model learns to mimic a large “teacher” model

High quality

Slow inference

Good quality

Fast inference

Why soft labels? They encode richer information

Hard label (one-hot): \[P(\text{next token}) = [0, 0, 1, 0, 0, \ldots]\]

Soft label (teacher distribution): \[P(\text{next token}) = [0.01, 0.15, 0.60, 0.12, 0.08, \ldots]\]

everything else: 0.0

floor: 0.15, couch: 0.08...

The distillation loss combines hard and soft targets

Training objective:

\[ \mathcal{L}_{\text{distill}} = \alpha \cdot \mathcal{L}_{\text{soft}} + (1-\alpha) \cdot \mathcal{L}_{\text{hard}} \]

where:

\[ \mathcal{L}_{\text{soft}} = -\sum_i P_T^{(\tau)}(i) \log P_S^{(\tau)}(i) \]

\[ P^{(\tau)}(i) = \frac{\exp(z_i / \tau)}{\sum_j \exp(z_j / \tau)} \]

- \(\tau\) = temperature (same concept as Part 7! Higher → softer, reveals more structure)

- \(P_T\) = teacher probability, \(P_S\) = student probability

- \(\alpha\) balances learning from teacher vs. ground truth

Distillation training loop

# Knowledge distillation training loop (simplified)

for articles, reference_summaries in dataloader:

# Teacher generates soft labels (no gradient needed)

with torch.no_grad():

teacher_logits = teacher_model(articles, reference_summaries)

# Student predicts

student_logits = student_model(articles, reference_summaries)

# Soft loss: match teacher's distribution (with temperature)

soft_loss = F.kl_div(

F.log_softmax(student_logits / tau, dim=-1),

F.softmax(teacher_logits / tau, dim=-1),

reduction="batchmean"

) * (tau ** 2) # Scale factor corrects for temperature

# Hard loss: standard cross-entropy on ground truth

hard_loss = F.cross_entropy(student_logits, reference_summaries)

# Combined loss

loss = alpha * soft_loss + (1 - alpha) * hard_loss

loss.backward()Distillation in practice: notable examples

| Student Model | Teacher | Key Benefit |

|---|---|---|

| DistilBERT | BERT-base | 40% smaller, 60% faster, 97% performance |

| TinyLlama (1.1B) | LLaMA-7B | Mobile-friendly, strong performance |

| Gemma 2B | Larger Gemma | Efficient on-device deployment |

| Phi-3 | GPT-4 (synthetic data) | High capability in small package |

In Assignment 3: You’ll use Qwen-1.5B (teacher) to generate synthetic summaries, then fine-tune GPT-2 124M (student) on them.

Summary: From pretrained model to deployed assistant

We traced the full path from a pretrained LLM to a deployed assistant:

- Instruction Tuning (SFT): Teach the model to follow instructions using curated data

- RLHF / DPO: Align with human preferences using pairwise comparisons

- Decoding: Generate text with greedy, temperature, top-k, or top-p sampling

- Knowledge Distillation: Compress large models into smaller, deployable students

Tip

These are three independent control axes that together determine real-world model behavior. (Training, Inference, and Deployment)

Coming up next

We built the model and learned how it generates text. But how you use it matters enormously:

- Prompting strategies: Zero-shot, few-shot, and chain-of-thought

- In-context learning: How LLMs learn from examples in the prompt — without any gradient updates

- Reasoning: Can LLMs solve multi-step problems? When and why do they fail?

Next: We control the input (prompting and reasoning)

CSE 447/517 26wi - NLP